Fine-Tuning Transformers

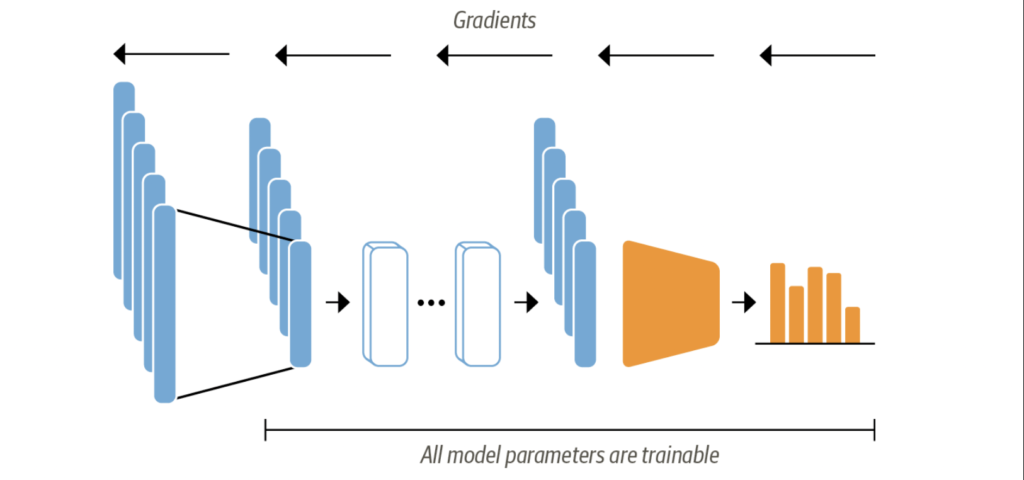

In the fine-tuning approach, we do not use the hidden states as fixed features. Instead, we train the whole model, so the hidden states themselves are updated during training. This requires the classification head to be differentiable, which is why a neural network is typically used for the classification task.

We will use a pre-trained model in this approach as well. The only modification is that we use the AutoModelForSequenceClassification model instead of AutoModel. The difference is that AutoModelForSequenceClassification adds a classification head on top of the pre-trained model's outputs, and this head can be trained together with the base model. We only need to specify how many labels the model has to predict (six in our case), since this determines the number of outputs of the classification head.
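To make the idea of a classification head concrete, here is a minimal sketch in plain Python. It is not the library's actual implementation: it assumes the head is a single linear layer that maps one hidden-state vector to one logit per label, followed by a softmax, whereas the real head may contain additional layers. The names `linear_head` and `softmax`, the toy `hidden_size`, and the random weights are all illustrative assumptions.

```python
import math
import random


def linear_head(hidden, weights, bias):
    """Map one hidden-state vector to one logit per label (logits = W.h + b)."""
    return [sum(w * h for w, h in zip(row, hidden)) + b
            for row, b in zip(weights, bias)]


def softmax(logits):
    """Turn logits into probabilities that sum to 1 (numerically stable form)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


random.seed(0)
hidden_size = 8   # toy value (assumption); real models use much larger vectors
num_labels = 6    # same number of labels as in the article

# Stand-in for the hidden state the pre-trained model produces for one text
hidden = [random.uniform(-1, 1) for _ in range(hidden_size)]

# Randomly initialised head parameters: one weight row and one bias per label
weights = [[random.uniform(-0.1, 0.1) for _ in range(hidden_size)]
           for _ in range(num_labels)]
bias = [0.0] * num_labels

logits = linear_head(hidden, weights, bias)
probs = softmax(logits)
predicted_label = max(range(num_labels), key=lambda i: probs[i])
print(predicted_label, [round(p, 3) for p in probs])
```

Because every operation here is differentiable, a training loop can push gradients through the head and into the base model, which is what distinguishes fine-tuning from using frozen features.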

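from transformers import AutoModelForSequenceClassification

# Number of classes the classification head must predict
num_labels = 6

# Load the pre-trained checkpoint with a new classification head and move it
# to the target device (model_ckpt and device are assumed to be defined earlier)
model = (AutoModelForSequenceClassification
         .from_pretrained(model_ckpt, num_labels=num_labels)
         .to(device))

While training the model, it is important to evaluate it regularly to monitor how it is performing. Let’s define a function that measures our model’s performance: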

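from sklearn.metrics import accuracy_score, f1_score

def compute_metrics(pred):
    # pred holds the true labels and the model outputs for the evaluation set
    labels = pred.label_ids
    # Pick the highest-scoring class for each example
    preds = pred.predictions.argmax(-1)
    # Weighted F1 averages the per-class F1 scores by class support
    f1 = f1_score(labels, preds, average="weighted")
    acc = accuracy_score(labels, preds)
    return {"accuracy": acc, "f1": f1}

Now that we have prepared our ammunition and shield, let’s jump into the battle of training.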
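Before training starts, it can help to see what `compute_metrics` actually calculates. The sketch below reimplements the argmax step, accuracy, and support-weighted F1 in plain Python on a tiny made-up example. The logits, labels, and helper names (`argmax`, `accuracy`, `weighted_f1`) are illustrative assumptions; in practice the scikit-learn functions used above should be preferred.

```python
def argmax(row):
    """Index of the largest score in a list of per-class scores."""
    return max(range(len(row)), key=lambda i: row[i])


def accuracy(labels, preds):
    """Fraction of examples whose predicted class matches the true class."""
    return sum(l == p for l, p in zip(labels, preds)) / len(labels)


def weighted_f1(labels, preds):
    """Per-class F1 scores averaged with weights equal to each class's support."""
    total = 0.0
    for cls in set(labels):
        tp = sum(l == cls and p == cls for l, p in zip(labels, preds))
        fp = sum(l != cls and p == cls for l, p in zip(labels, preds))
        fn = sum(l == cls and p != cls for l, p in zip(labels, preds))
        f1 = 2 * tp / (2 * tp + fp + fn) if tp else 0.0
        total += f1 * (tp + fn)   # tp + fn is the number of true examples of cls
    return total / len(labels)


# Made-up model outputs for 5 examples and 3 classes
logits = [
    [2.0, 0.1, -1.0],
    [0.2, 1.5, 0.0],
    [-0.5, 3.0, 0.3],
    [0.0, 0.9, 0.1],
    [-1.0, 0.0, 2.2],
]
labels = [0, 0, 1, 1, 2]

preds = [argmax(row) for row in logits]   # [0, 1, 1, 1, 2]
acc = accuracy(labels, preds)             # 0.8
f1 = weighted_f1(labels, preds)           # about 0.787
print({"accuracy": acc, "f1": round(f1, 3)})
```

The single mistake (a class 0 example predicted as class 1) lowers recall for class 0 and precision for class 1, so the weighted F1 ends up slightly below the accuracy.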

from transformers import Trainer, TrainingArguments

batch_size = 64
# Roughly one log entry per epoch: training examples divided by the batch size
logging_steps = len(emotions_encoded["train"]) // batch_size
model_name = f"{model_ckpt}-finetuned-emotion"
training_args = TrainingArguments(output_dir=model_name,
                                  num_train_epochs=2,
                                  learning_rate=2e-5,
                                  per_device_train_batch_size=batch_size,
                                  per_device_eval_batch_size=batch_size,
                                  weight_decay=0.01,
                                  # Evaluate at the end of every epoch; recent
                                  # transformers releases call this eval_strategy
                                  evaluation_strategy="epoch",
                                  disable_tqdm=False,
                                  logging_steps=logging_steps,
                                  # Requires being logged in to the Hugging Face Hub
                                  push_to_hub=True,
                                  log_level="error")

Here we define the batch size, learning rate, and number of training epochs, and we ask the Trainer to evaluate the model at the end of every epoch. Note that these arguments do not set load_best_model_at_end, so the model left in memory after training is the one from the final epoch rather than the best checkpoint. With these settings in place, we are ready to instantiate the Trainer and fine-tune our model.
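Before doing so, the arithmetic behind `logging_steps` is worth a quick look. The sketch below assumes a training split of 16,000 examples (an assumption, since the size is not stated here), a single device, and no gradient accumulation; under those assumptions it shows how many optimizer steps the run takes and at which steps a log entry would be written.

```python
import math

num_train_examples = 16_000   # assumption: size of emotions_encoded["train"]
batch_size = 64
num_train_epochs = 2

# Same formula as in the training setup above
logging_steps = num_train_examples // batch_size

# One optimizer step per batch; the last batch may be smaller, hence the ceil
steps_per_epoch = math.ceil(num_train_examples / batch_size)
total_steps = steps_per_epoch * num_train_epochs

# Steps at which a log entry is written (every logging_steps steps)
logged_at = [step for step in range(1, total_steps + 1)
             if step % logging_steps == 0]

print(logging_steps, total_steps, logged_at)   # 250 500 [250, 500]
```

With these numbers the training loss is logged once per epoch, which lines up with the per-epoch evaluation.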

from transformers import Trainer

trainer = Trainer(model=model, args=training_args,
                  compute_metrics=compute_metrics,
                  train_dataset=emotions_encoded["train"],
                  eval_dataset=emotions_encoded["validation"],
                  tokenizer=tokenizer)
trainer.train();